Contextual Multi-Armed Bandit

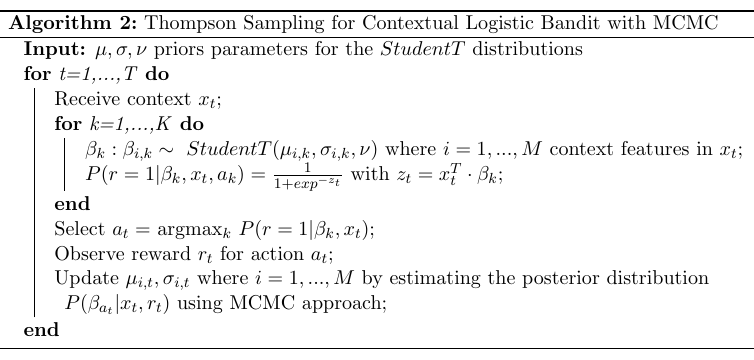

For the contextual multi-armed bandit (cMAB), where user information (the context) is available, we implemented a generalisation of the Thompson sampling algorithm (Agrawal and Goyal, 2014) based on NumPyro.

The following notebook shows an example of how to use the class CmabBernoulli, which implements the algorithm above.

[1]:

import numpy as np

from pybandits.cmab import CmabBernoulli

from pybandits.model import BayesianNeuralNetwork, BnnLayerParams, BnnParams, FeaturesConfig, StudentTArray


[2]:

n_samples = 1000

n_features = 5

First, we need to define the input context matrix \(X\) of size (\(n\_samples, n\_features\)) and the mapping of possible actions \(a_i \in A\) to their associated model.

[3]:

# context

X = 2 * np.random.random_sample((n_samples, n_features)) - 1  # random floats in the half-open interval [-1, 1)

print("X: context matrix of shape (n_samples, n_features)")

print(X[:10])

X: context matrix of shape (n_samples, n_features)

[[-0.16399088 -0.97453958 0.66492246 -0.96734812 -0.98426086]

[ 0.36162366 0.31376295 -0.77242397 -0.09694901 -0.64685443]

[-0.72191377 -0.85654125 0.71529686 -0.83985932 0.12865115]

[-0.55003773 0.21873921 0.26701932 -0.50162046 0.60458222]

[ 0.7591858 0.05091745 0.71361806 -0.82339602 -0.68299624]

[-0.03665504 -0.15688168 -0.7899587 0.85248436 0.16421691]

[-0.46126863 0.2762128 -0.99032885 -0.3319534 -0.80944934]

[-0.03754528 0.71264975 -0.85703706 0.49618389 -0.84677789]

[ 0.78621472 -0.31040366 -0.11765096 0.13790086 -0.54389918]

[ 0.23936352 0.09660428 -0.06943596 -0.4992947 0.74090185]]

[4]:

# define action model

bias = StudentTArray.cold_start(mu=1, sigma=2, shape=1)

weight = StudentTArray.cold_start(shape=(n_features, 1))

layer_params = BnnLayerParams(weight=weight, bias=bias)

model_params = BnnParams(bnn_layer_params=[layer_params])

feature_config = FeaturesConfig(n_features=n_features)

update_method = "VI"

update_kwargs = {"num_steps": 100, "batch_size": 128, "optimizer_type": "adam"}

actions = {

    "a1": BayesianNeuralNetwork(

        model_params=model_params,

        feature_config=feature_config,

        update_method=update_method,

        update_kwargs=update_kwargs,

    ),

    "a2": BayesianNeuralNetwork(

        model_params=model_params,

        feature_config=feature_config,

        update_method=update_method,

        update_kwargs=update_kwargs,

    ),

}

We can now initialise the bandit given the mapping of actions \(a_i\) to their models.

[5]:

# init contextual Multi-Armed Bandit model

cmab = CmabBernoulli(actions=actions)

The predict function below returns the action selected by the bandit at time \(t\): \(a_t = \arg\max_k P(r=1 \mid \beta_k, x_t)\), where \(\beta_k\) denotes the model parameters of action \(k\). The bandit selects one action for each sample (row) of the context matrix \(X\).

[6]:

# predict action

pred_actions, _, _ = cmab.predict(X)

print("Recommended action: {}".format(pred_actions[:10]))

Recommended action: ['a2', 'a1', 'a1', 'a1', 'a2', 'a1', 'a1', 'a2', 'a2', 'a2']
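To make the selection rule above more concrete, here is a minimal NumPy sketch of Thompson sampling for two actions. It is not the library's implementation: it assumes a single linear layer with a sigmoid output and independent Gaussian posteriors (the notebook itself uses Student-t distributions), and the names `thompson_select`, `toy_posteriors` and `X_demo` are hypothetical.

```python
import numpy as np


def sigmoid(z):
    """Logistic function mapping real values to (0, 1)."""
    return 1.0 / (1.0 + np.exp(-z))


def thompson_select(X, posteriors, rng):
    """Pick one action per row of X by sampling parameters and taking the argmax.

    posteriors maps each action id to (mu_w, sigma_w, mu_b, sigma_b), the
    assumed Gaussian posterior over that action's weights and bias.
    """
    action_ids = list(posteriors)
    n_samples, n_features = X.shape
    probs = np.empty((n_samples, len(action_ids)))
    for k, action in enumerate(action_ids):
        mu_w, sigma_w, mu_b, sigma_b = posteriors[action]
        # Draw an independent parameter sample for every context row.
        w = rng.normal(mu_w, sigma_w, size=(n_samples, n_features))
        b = rng.normal(mu_b, sigma_b, size=n_samples)
        # Sampled probability of a positive reward, P(r=1 | beta_k, x_t).
        probs[:, k] = sigmoid(np.sum(X * w, axis=1) + b)
    best = probs.argmax(axis=1)
    return [action_ids[i] for i in best], probs


rng = np.random.default_rng(0)
X_demo = 2 * rng.random((8, 5)) - 1  # toy context with 8 samples and 5 features
toy_posteriors = {
    "a1": (np.zeros(5), np.ones(5), 0.0, 1.0),
    "a2": (np.zeros(5), np.ones(5), 0.0, 1.0),
}
chosen, sampled_probs = thompson_select(X_demo, toy_posteriors, rng)
print(chosen)
```

Because the parameters are sampled rather than fixed at their posterior means, actions with uncertain models are still tried occasionally, which is how Thompson sampling balances exploration and exploitation.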

Now we observe the rewards and the context from the environment. In this example, both the rewards and the context are randomly simulated.

[7]:

# simulate reward from environment

simulated_rewards = np.random.randint(2, size=n_samples).tolist()

print("Simulated rewards: {}".format(simulated_rewards[:10]))

Simulated rewards: [1, 0, 0, 0, 1, 0, 1, 1, 1, 0]
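The rewards above are independent of both the context and the chosen action, so there is nothing for the bandit to learn from them. As a possible alternative for experimentation, the sketch below draws Bernoulli rewards whose success probability depends on the context through a logistic model. The ground-truth weights in `true_weights` and the helper `simulate_rewards` are hypothetical and are not part of pybandits.

```python
import numpy as np

rng = np.random.default_rng(42)
n_samples, n_features = 1000, 5
X_sim = 2 * rng.random((n_samples, n_features)) - 1  # context in [-1, 1)

# Hypothetical ground-truth weights, one vector per action (an assumption).
true_weights = {
    "a1": rng.normal(size=n_features),
    "a2": rng.normal(size=n_features),
}


def simulate_rewards(X, chosen_actions, weights, rng):
    """Draw one Bernoulli reward per sample from a logistic model of the context."""
    rewards = []
    for x, action in zip(X, chosen_actions):
        p = 1.0 / (1.0 + np.exp(-(x @ weights[action])))  # P(r=1 | x, action)
        rewards.append(int(rng.binomial(1, p)))
    return rewards


# Stand-in for the bandit's recommendations: actions picked uniformly at random.
chosen_sim = rng.choice(["a1", "a2"], size=n_samples).tolist()
context_rewards = simulate_rewards(X_sim, chosen_sim, true_weights, rng)
print("Simulated rewards: {}".format(context_rewards[:10]))
```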

Finally, we update the model by providing, for each sample: (i) its context \(x_t\), (ii) the action \(a_t\) selected by the bandit, and (iii) the corresponding reward \(r_t\).

[8]:

# update model

cmab.update(context=X, actions=pred_actions, rewards=simulated_rewards)
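Conceptually, each action's model only learns from the samples for which that action was selected. The sketch below illustrates this grouping step with plain NumPy. The helper `split_by_action` is hypothetical, and the library's internal update logic may differ.

```python
import numpy as np


def split_by_action(context, actions, rewards):
    """Group contexts and rewards by the action that was played on each sample."""
    actions = np.asarray(actions)
    rewards = np.asarray(rewards)
    return {
        str(a): (context[actions == a], rewards[actions == a])
        for a in np.unique(actions)
    }


toy_context = np.arange(8, dtype=float).reshape(4, 2)  # 4 samples, 2 features
toy_actions = ["a2", "a1", "a1", "a2"]
toy_rewards = [1, 0, 1, 1]

batches = split_by_action(toy_context, toy_actions, toy_rewards)
for action, (ctx, rew) in batches.items():
    # Each per-action batch would be used to update that action's model.
    print(action, ctx.shape, rew.tolist())
```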